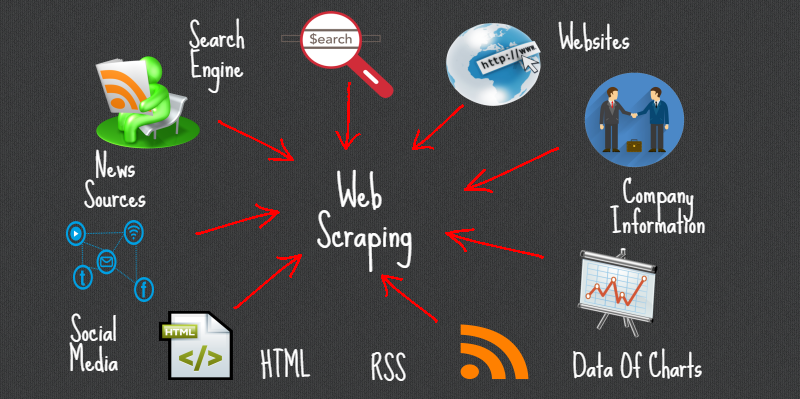

Web scraping is a term frequently used by people who live and breathe data.

But like all business buzzwords, web scraping sounds confusing if you’re not familiar with it. So what exactly is web scraping, and why should you use it?

What is Web Scraping?

Web scraping is the process of extracting data from different websites or sources, including images, videos, text, and more.

A piece of code is used to “scrape” the source you’re looking at, and then it generates a document with the data based on the results.

For example, an online retailer might use web scraping to view their competitors’ prices, or a SaaS company might use web scraping to capture email leads.
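To make the "piece of code" idea concrete, here is a minimal sketch using only Python's standard library. It assumes the page has already been downloaded; the `SAMPLE_HTML` markup, the `name` and `price` class names, and the `ProductParser` and `scrape_to_csv` helpers are all hypothetical and stand in for whatever a real site and a real scraper would use.

```python
import csv
import io
from html.parser import HTMLParser

# Hypothetical markup standing in for a page that has already been fetched.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$19.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$24.50</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span class="name"> and <span class="price"> tags."""

    def __init__(self):
        super().__init__()
        self.rows = []       # finished {"name": ..., "price": ...} records
        self._field = None   # which field the current <span> holds, if any
        self._current = {}   # record being built for the current <li>

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "span" and cls in ("name", "price"):
            self._field = cls

    def handle_data(self, data):
        if self._field:
            self._current[self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None
        elif tag == "li" and self._current:
            # End of one product: store the record and start a fresh one.
            self.rows.append(self._current)
            self._current = {}


def scrape_to_csv(html: str) -> str:
    """Parse the HTML and return the extracted rows as CSV text."""
    parser = ProductParser()
    parser.feed(html)
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(parser.rows)
    return out.getvalue()


print(scrape_to_csv(SAMPLE_HTML))
```

The tools reviewed in this article wrap this same loop (fetch, parse, export) in a visual interface, so you rarely have to write it yourself.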

There are countless ways to use web scraping, and it’s a very common practice for most businesses.

How Do You Use a Web Scraping Tool?

You use a web scraping tool to extract large amounts of data from websites quickly and easily. It also allows you to monitor your brand’s online reputation, and see what your competitors are up to.

1 – Efficiency

What would take several people hours, or even days, to do manually, a scraping tool can do in minutes. Not only that, but the data arrives in a consistent, easy-to-work-with format. Although Moz featured web scraping a few years back, many marketers still don’t leverage it.

2 – Brand Monitoring

With a web scraping tool, it’s easy to keep tabs on your company’s online reputation. You can monitor social conversations to determine what your customers are saying about you. With that information, you can easily respond to people who had something negative to say.

3 – Competitive Analysis

Marketers spend a lot of time spying on their competitors. Instead of doing that manually, you can let a web scraping tool automate the process. You can choose exactly which platforms you want to monitor (a competitor’s website, their social media profiles, and so on) and get the results you want, when you want them.
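Once scraped data is in a structured form, the monitoring step itself can be very small. The sketch below assumes two hypothetical snapshots of competitor prices (`yesterday` and `today`), each already scraped into a dictionary, and a made-up helper named `price_changes` that reports what moved between them.

```python
def price_changes(
    previous: dict[str, float], current: dict[str, float]
) -> dict[str, tuple[float, float]]:
    """Return {product: (old_price, new_price)} for products whose price moved."""
    return {
        name: (previous[name], price)
        for name, price in current.items()
        # Only compare products present in both snapshots.
        if name in previous and previous[name] != price
    }


# Hypothetical snapshots from two scraping runs.
yesterday = {"Widget": 19.99, "Gadget": 24.50, "Gizmo": 9.00}
today = {"Widget": 17.99, "Gadget": 24.50, "Doohickey": 5.00}

for name, (old, new) in price_changes(yesterday, today).items():
    print(f"{name}: {old:.2f} -> {new:.2f}")
```

Products that appear in only one snapshot are ignored here. A fuller version would probably report new and discontinued items as well.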

The Best Web Scraping Tools and Software

Now that I’ve opened up your eyes to the possibility of scraping data from hundreds or thousands of sites in a matter of minutes, here is a list of the best scraping tools for the job.

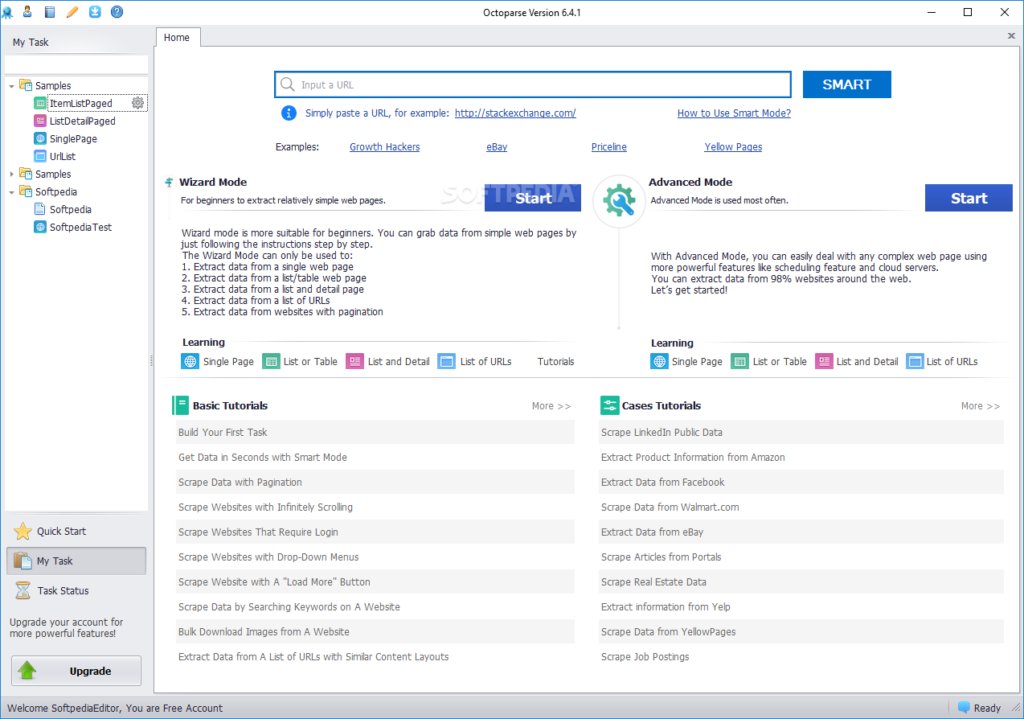

1. Octoparse

About:

Octoparse is a visual scraping tool that makes harvesting large amounts of data quick, efficient, and most importantly of all – easy.

One of their marketing taglines that I thought was spot on is “Turn web pages into structured spreadsheets within clicks.” That’s exactly what you want from web scraping software, and it lives up to its billing.

You don’t need to understand how to code to use this tool. Simply fill out some parameters and let Octoparse do the work.

Ease of Use:

- User-friendly point-and-click interface

- Smooth workflow visuals

- Simply input a few search terms and you can start scraping

Features:

- Able to scrape from any type of dynamic website

- Cloud extraction for fast processing

- Can set up scheduled extractions

Pricing:

Their free plan is fairly generous, allowing 10 crawlers and some large reporting. Once you outgrow these reporting limitations, their plans are $75 per month for their Standard Plan or $209 per month for their Professional Plan.

Bottom Line:

Octoparse is up there with the best of the best web scraping tools and software. The user-friendly interface makes it a breeze to pick up and start using, and the deeper you dig into the toolkit the more tools you find.

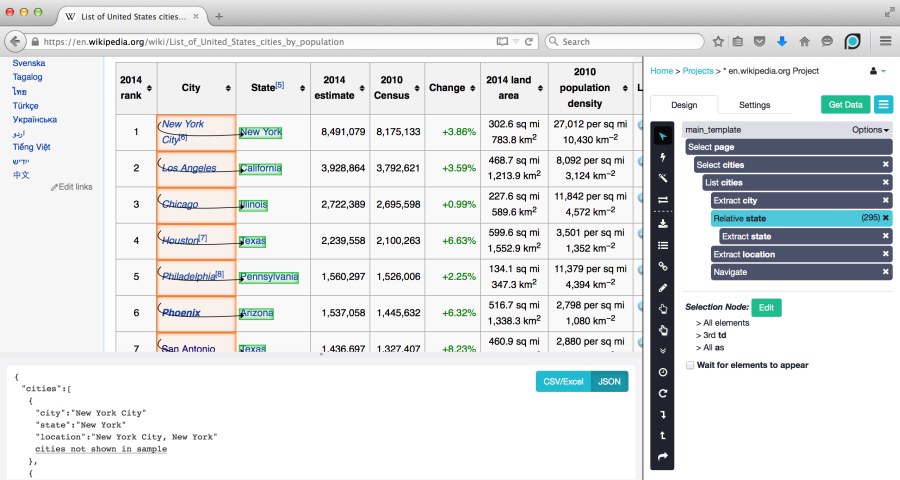

2. ParseHub

About:

ParseHub is a powerful web scraping tool that enables you to build your own web scrapers without the need for any coding knowledge. You can start scraping a site by typing in the URL and letting this software get to work.

ParseHub has a deep pool of advanced features, but don’t let that intimidate you. It’s incredibly easy to use on the most basic scraping level, and they have a detailed library of tutorials if there’s something you can’t figure out.

You can schedule runs, set conditions and rules, pull data from images, maps, tables, and other rich forms, and loads more. You can export data in JSON or Excel format for analysis.
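As a rough illustration of what "analysis" can mean once you have a JSON export, the sketch below loads one and counts records per category. The `EXPORT` string, its `listings` key, and the `title` and `category` fields are invented for this example. The layout of a real export depends on how the scraping project is configured.

```python
import json
from collections import Counter

# Hypothetical export; a real tool's JSON layout depends on the project setup.
EXPORT = """
{"listings": [
  {"title": "Blue mug", "category": "kitchen"},
  {"title": "Red mug", "category": "kitchen"},
  {"title": "Desk lamp", "category": "office"}
]}
"""


def count_by_category(raw_json: str) -> Counter:
    """Count how many scraped records fall into each category."""
    records = json.loads(raw_json)["listings"]
    return Counter(record["category"] for record in records)


counts = count_by_category(EXPORT)
print(counts.most_common())
```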

Ease of Use:

- Super easy to get going, just add a URL and click “Start Project”

- Extract data in different easy-to-use formats

- Smooth interface and navigation

Features:

- Automatic IP rotation

- Effective at scraping all forms of rich or dynamic data

- Clients for Windows, Mac OS, and Linux

Pricing:

You can scrape 200 pages of data in less than 40 minutes with their free plan. Their Standard Plan costs $149 per month and reduces that time to 10 minutes while upping your other allowances. Power users will want their $499 per month Professional Plan.

Bottom Line:

ParseHub is one of the most powerful desktop scraping applications, able to scrape a wide range of data types, and it’s reasonably priced. There’s no reason not to try their free plan – if you’ve got those 40 minutes to spare, that is.

3. ScrapeSimple

About:

“ScrapeSimple is a service that builds web scrapers and periodically emails you a CSV with the information you need.”

That’s their own words, and it sums up this scraping tool perfectly. ScrapeSimple is, as the name suggests, a scraping tool that’s easy to use.

All you do is fill out the information you want to scrape from a site, input the URL, and set the software to the task. You can set up a rule to periodically deliver reports to your inbox, giving you a set-it-and-forget-it option.

Ease of Use:

- Just fill out 4 forms to start scraping

- Their team works on the backend to produce your reports

- Receive data in your preferred format

Features:

- Reports go to your inbox when complete

- Limited features compared to other tools and software

Pricing:

There are no set pricing plans or per-usage costs like most scrapers have. You need to provide all the sites you want scraped, and they’ll get back to you with a cost and time estimate.

Bottom Line:

If you have a list of sites that you want to scrape periodically and don’t want to micro-manage the process yourself, ScrapeSimple is probably the tool for you. For those with ad-hoc needs, it’s just not flexible enough.

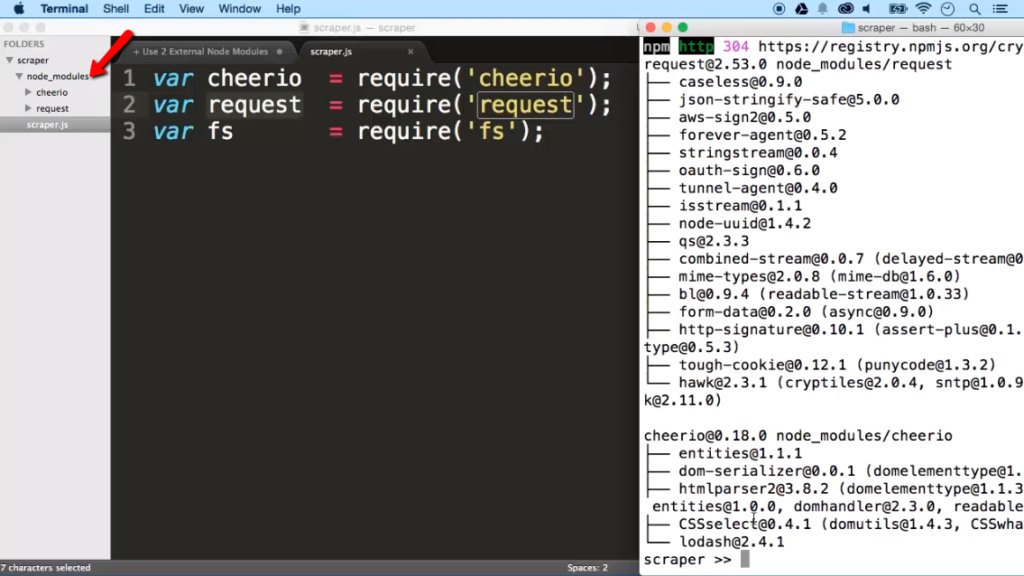

4. Cheerio

About:

Cheerio provides a “fast, flexible, and lean implementation of core jQuery designed specifically for the server.”

This software efficiently parses XML and HTML documents and allows you to analyze web pages using a jQuery-like syntax. The Cheerio API is similar to jQuery, so if you’re experienced with that, you’ll pick this up right away.

It’s important to note that jQuery itself only runs inside a browser, so you can’t use it directly for server-side scraping. Cheerio fills that gap by bringing the same syntax to the server.

Ease of Use:

- Knowledge of jQuery will help you make the most of this software

- Runs on the server, whereas jQuery itself only works inside a browser

- Lacking some automation features seen as part of other scraping software

Features:

- Familiar syntax – implements a subset of core jQuery without the browser-specific baggage

- Efficient and fast

- Can parse almost any XML or HTML document

Pricing:

Cheerio is free to use and is available through GitHub.

Bottom Line:

If you want to get your hands dirty and have better control over how you’re scraping and the information you’re looking for, Cheerio allows this. It’s not for beginners or anyone looking to quickly scrape a static URL for a bit of information.

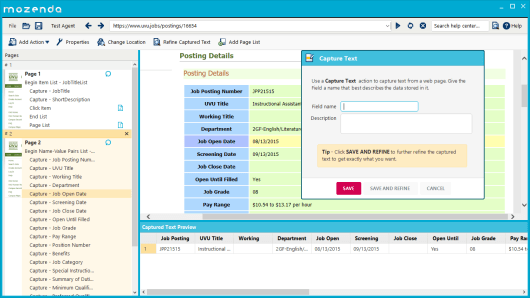

5. Mozenda

About:

With billion-dollar companies like Cisco Systems on their testimonial page stating that they increased their revenue “significantly” using Mozenda, you’d be crazy not to take a closer look.

Mozenda is cloud-based scraping software that enables businesses to scrape key data on the internet to use to their advantage. They provide API access and have inbuilt storage integrations, such as Amazon S3, Dropbox, FTP, and more.

They don’t just build the scrapers to help you get the information you’re after; Mozenda also helps you clean up raw data. They offer some flexible and detailed scraping solutions.

Ease of Use:

- Easy point-and-click interface

- You’ll need some basic coding skills to make the most of this software

Features:

- You can export data in CSV, JSON, XLSX, or XML format

- Integrates with a wide range of applications

- Range of managed solutions for large bespoke projects

Pricing:

Pricing starts at $250 per month, so it’s on the expensive side. There are more pricing tiers of $350 and $450+ per month. You can try it free for 30 days, however, which is more than long enough to get a feel for how it works.

Bottom Line:

Mozenda is up there with the best tools based on the suite of features you have at your disposal. If you’ve ever used a scraping tool and found yourself wanting more, I’d take a 30-day trial with Mozenda.

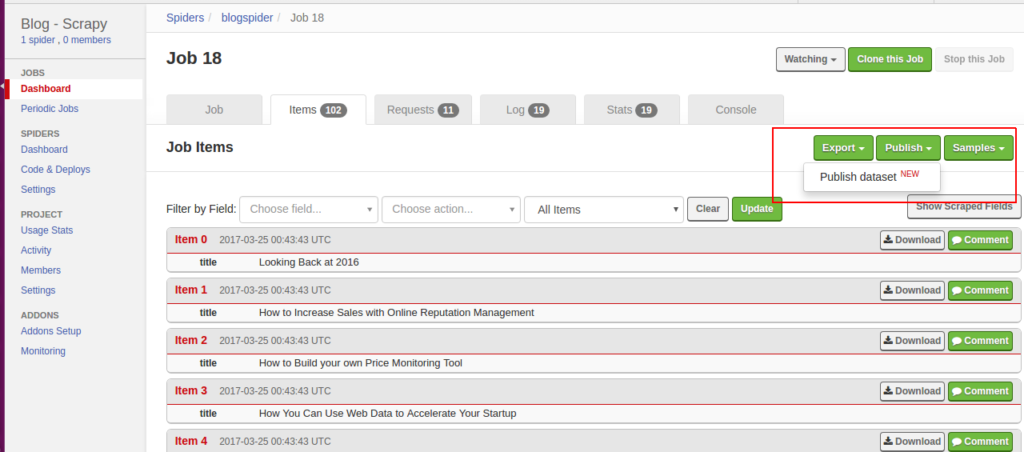

6. ScrapingHub

About:

If you want to “gain a competitive edge with the world’s leading web scraping services and tools,” then look no further than ScrapingHub.

ScrapingHub is a cloud-based data extraction tool that uses Crawlera, a smart HTTP/HTTPS downloader designed for web crawling. Crawlera is able to bypass a lot of the bot counter-measures in place to block scrapers, increasing the chance of returning the data you want.

At face value, you can convert entire web pages into organized content ready to manipulate as per your project needs. Or, you can dig deeper into their developer tool kit to perform bespoke scraping missions.

Ease of Use:

- Some basic understanding of HTML is beneficial

- Easy to navigate dashboard and UX

- See data in a clean and easy-to-use format

Features:

- Easily monitor pricing and product info for your competitors

- Quickly aggregate information and statistics in real time

- Perform competitive market research using their scraping tools

Pricing:

Pricing starts at $9 per month for their basic services, going up to $25 per month to use their Crawlera-powered scrapers.

Bottom Line:

If your goal is to use a web scraping tool to replace some of the manual operations within your business, ScrapingHub is going to save you a lot of time and money. Their scraping technology is fast, efficient, and comes in at a competitive price.

Conclusion

Web scraping allows you to extract any type of data you want. If you’re in the market for a web scraping tool, we recommend looking into Octoparse. It’s an easy-to-use platform with a generous free plan and comprehensive features to help you make the most of your data.